Knowledge base

Datastore management

Part of the power of SyncHub, is the ability to automatically map your API endpoints into a relational database. All of this happens behind-the-scenes, so you don't need a DBA on staff in order to use our service - just click and go!

However, databases are sophisticated, and there is a lot of additional configuration available to those who need it. For this, we have our Datastore Management module.

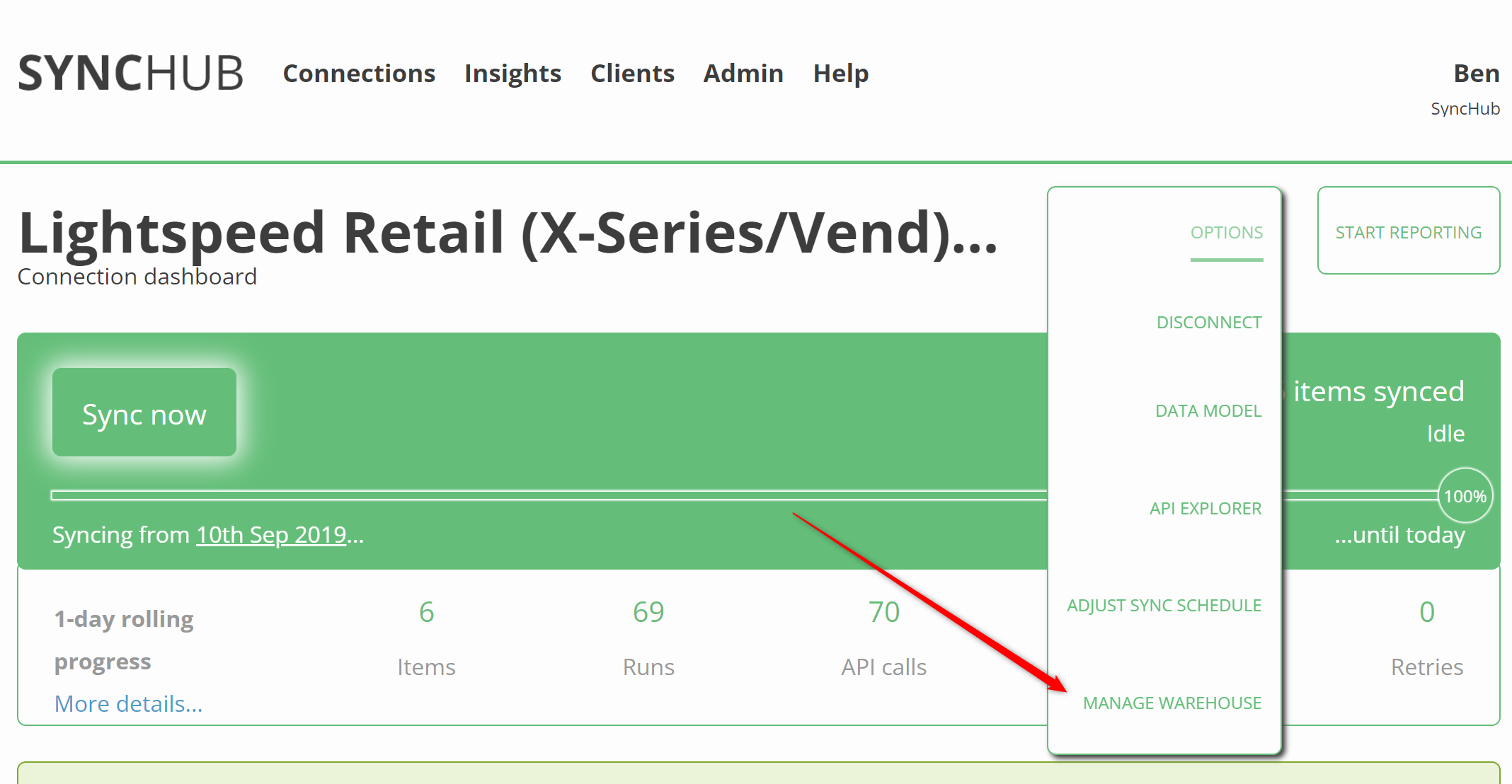

To access the module, simply open your Connection Dashboard, then select Options - Manage data store from the main menu:

Foreign keys

Create foreign keys in your data store, to mirror the relationships we have mapped in your data model. Creating foreign keys allows your reporting tool to make smarter suggestions when building your reports

Index management

Increase the performance of your reports, by creating indexes on key columns

Firewall security

Use our Firewall module to restrict access to your data store, based on IP address

Performance cache

The SyncHub cache drastically improves the performance of your data sync, but sometimes you need to disable it for things like GDPR or general compliance.

Ingestion mode

If you are using BigQuery, Redshift or Snowflake, you have an option to change the Ingestion mode of your data stream.

1. Normalized

Changes to your data result in modifications to the corresponding record in your data store, according to the RemoteID column. This results in a properly normalized database, with a single record reflecting the corresponding item in your cloud service, however it may be somewhat slower to write your data

select tbl.*

from Client tbl

limit 50

2. Append Only

All modifications to your records are recorded with new INSERTs to your data store, meaning the unique ID of your records becomes a composite of RemoteID/WhenUpsertedIntoDataStore. This is recommended for large and/or frequently changing data stores as it is highly performant, however it takes up a lot more room in your data store

with lastUpserts as (

-- Our BQ connector is insert-only. Changes to records are appended to the data set, with an updated 'WhenUpsertedIntoDataStore' timestamp

-- So what we do here is grab the latest version of each record, by grouping our unique key (RemoteID) by the max timestamp value...

select max(WhenUpsertedIntoDataStore) as WhenLastUpsert, RemoteID

from Client

group by RemoteID

)

-- ...then we just join back to these latest timestamps using a regular JOIN statement

select tbl.*

from Client tbl

inner join lastUpserts on (

tbl.WhenUpsertedIntoDataStore = lastUpserts.WhenLastUpsert

and tbl.RemoteID = lastUpserts.RemoteID

)

limit 50